#python array numpy


Python Libraries to Learn Before Tackling Data Analysis

To tackle data analysis effectively in Python, it's crucial to become familiar with several libraries that streamline the process of data manipulation, exploration, and visualization. Here's a breakdown of the essential libraries:

1. NumPy

- Purpose: Numerical computing.
- Why Learn It: NumPy provides support for large multi-dimensional arrays and matrices, along with a collection of mathematical functions to operate on these arrays efficiently.
- Key Features:
  - Fast array processing.
  - Mathematical operations on arrays (e.g., sum, mean, standard deviation).
  - Linear algebra operations.

2. Pandas

- Purpose: Data manipulation and analysis.
- Why Learn It: Pandas offers data structures like DataFrames, making it easier to handle and analyze structured data.
- Key Features:
  - Reading/writing data from CSV, Excel, SQL databases, and more.
  - Handling missing data.
  - Powerful group-by operations.
  - Data filtering and transformation.

3. Matplotlib

- Purpose: Data visualization.
- Why Learn It: Matplotlib is one of the most widely used plotting libraries in Python, allowing for a wide range of static, animated, and interactive plots.
- Key Features:
  - Line plots, bar charts, histograms, scatter plots.
  - Customizable charts (labels, colors, legends).
  - Integration with Pandas for quick plotting.

4. Seaborn

- Purpose: Statistical data visualization.
- Why Learn It: Built on top of Matplotlib, Seaborn simplifies the creation of attractive and informative statistical graphics.
- Key Features:
  - High-level interface for drawing attractive statistical graphics.
  - Easier to use for complex visualizations like heatmaps, pair plots, etc.
  - Visualizations based on categorical data.

5. SciPy

- Purpose: Scientific and technical computing.
- Why Learn It: SciPy builds on NumPy and provides additional functionality for complex mathematical operations and scientific computing.
- Key Features:
  - Optimized algorithms for numerical integration, optimization, and more.
  - Statistics, signal processing, and linear algebra modules.

6. Scikit-learn

- Purpose: Machine learning and statistical modeling.
- Why Learn It: Scikit-learn provides simple and efficient tools for data mining, analysis, and machine learning.
- Key Features:
  - Classification, regression, and clustering algorithms.
  - Dimensionality reduction, model selection, and preprocessing utilities.

7. Statsmodels

- Purpose: Statistical analysis.
- Why Learn It: Statsmodels allows users to explore data, estimate statistical models, and perform tests.
- Key Features:
  - Linear regression, logistic regression, time series analysis.
  - Statistical tests and models for descriptive statistics.

8. Plotly

- Purpose: Interactive data visualization.
- Why Learn It: Plotly allows for the creation of interactive and web-based visualizations, making it ideal for dashboards and presentations.
- Key Features:
  - Interactive plots like scatter, line, bar, and 3D plots.
  - Easy integration with web frameworks.
  - Dashboards and web applications with Dash.

9. TensorFlow/PyTorch (Optional)

- Purpose: Machine learning and deep learning.
- Why Learn It: If your data analysis involves machine learning, these libraries will help in building, training, and deploying deep learning models.
- Key Features:
  - Tensor processing and automatic differentiation.
  - Building neural networks.

10. Dask (Optional)

- Purpose: Parallel computing for data analysis.
- Why Learn It: Dask enables scalable data manipulation by parallelizing Pandas operations, making it ideal for big datasets.
- Key Features:
  - Works with NumPy, Pandas, and Scikit-learn.
  - Handles large data and parallel computations easily.

Focusing on NumPy, Pandas, Matplotlib, and Seaborn will set a strong foundation for basic data analysis.
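To give a feel for the first two libraries on that list, here is a minimal sketch of the NumPy and Pandas features named above (array statistics, a linear algebra call, missing-data handling, group-by, and filtering). The numbers, the `city` and `sales` columns, and the variable names are all made up for illustration.

```python
import numpy as np
import pandas as pd

# NumPy: vectorized statistics on an array (illustrative values).
values = np.array([2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0])
total = values.sum()
mean = values.mean()
std = values.std()  # population standard deviation (ddof=0)

# NumPy: a small linear algebra operation, solving A @ x = b.
A = np.array([[2.0, 1.0], [1.0, 3.0]])
b = np.array([3.0, 5.0])
x = np.linalg.solve(A, b)

# Pandas: a hypothetical table with one missing value.
df = pd.DataFrame({
    "city": ["Oslo", "Oslo", "Lima", "Lima"],
    "sales": [10.0, np.nan, 7.0, 5.0],
})
df["sales"] = df["sales"].fillna(0.0)         # handle missing data
per_city = df.groupby("city")["sales"].sum()  # group-by aggregation
big_sales = df[df["sales"] > 6]               # boolean filtering

print(total, mean, std)
print(x)
print(per_city)
print(big_sales)
```

Filling the missing value with zero is only one option; depending on the analysis, dropping the row or filling with a mean may be more appropriate.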


https://www.bipamerica.org/data-scientists-toolkit-top-python-libraries

A Data Scientist's toolkit heavily relies on Python libraries to handle data processing, analysis, and modeling. NumPy is essential for numerical computations and array operations, while Pandas provides powerful tools for data manipulation and analysis. Matplotlib and Seaborn are key for data visualization, enabling the creation of insightful charts and graphs.


How to do a summation of a series in python (an example)

Here is an example of summation of series in Python:

Python

    def summation_of_series(series):
        """Calculates the summation of a series of numbers.

        Args:
            series: A list of numbers.

        Returns:
            The summation of the series.
        """
        total = 0  # named "total" so the built-in sum() is not shadowed
        for number in series:
            total += number
        return total


    # Example usage:
    series = [1, 2, 3, 4, 5]
    total = summation_of_series(series)
    print(total)

Output:

15

This function can be used to calculate the summation of any series of numbers, regardless of the length of the series.

Here is another example of summation of series in Python, using the numpy library:

Python

    import numpy as np


    def summation_of_series_numpy(series):
        """Calculates the summation of a series of numbers using the `numpy` library.

        Args:
            series: A numpy array of numbers.

        Returns:
            The summation of the series.
        """
        # np.sum adds all elements of the array in one vectorized call
        return np.sum(series)


    # Example usage:
    series = np.array([1, 2, 3, 4, 5])
    total = summation_of_series_numpy(series)
    print(total)

Output:

15

This function is similar to the previous function, but it uses the numpy library to calculate the summation of the series. This can be more efficient for large series of numbers.
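For comparison, here is a short sketch of two other ways to get the same total: Python's built-in `sum()` and, for this particular arithmetic series only, the closed-form formula n(first + last)/2. The variable names are just for illustration.

```python
import numpy as np

series = [1, 2, 3, 4, 5]

# Built-in sum() works directly on a plain list, no loop needed.
builtin_total = sum(series)

# np.sum gives the same result on an array.
numpy_total = np.sum(np.array(series))

# For an arithmetic series, n * (first + last) / 2 avoids iterating at all.
closed_form = len(series) * (series[0] + series[-1]) // 2

print(builtin_total, numpy_total, closed_form)
```

The closed form only applies when consecutive terms differ by a constant; the other two approaches work for any list of numbers.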

I hope this helps!

#programmer#studyblr#learning to code#python#progblr#coding#kumar's python study notes#codeblr#programming


liveblogging my descent into madness over this lab report i’ve been procrastinating because what else can ya do to cope

10:22 PM — got Pandas working to read all my data into Python. do not like working with pd dataframes instead of numpy arrays but when you have 100,000 data points you take what you can get

this mfer is due 1 PM tomorrow so it’s an all-nighter (or near it) for me. rly wish i didn’t live with my (anti-alcohol) parents because i feel like a glass of wine would greatly improve my nerves about this situation but i suppose a honey cinnamon coffee will do

#uncertain ramblings#really should remember that tag exists more often#not everyone is here to hear me groan about my real life lol


How much Python should one learn before beginning machine learning?

Before diving into machine learning, a solid understanding of Python is essential. Here is what to cover:

Basic Python Knowledge:

Syntax and Data Types:

Understand Python syntax, basic data types (strings, integers, floats), and operations.

Control Structures:

Learn how to use conditionals (if statements), loops (for and while), and list comprehensions.
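As a rough illustration of those three control structures, here is a minimal sketch; the numbers and the size thresholds are arbitrary.

```python
numbers = [3, 8, 1, 12, 7]

# Conditional inside a for loop: label each number by size.
labels = []
for n in numbers:
    if n >= 10:
        labels.append("large")
    elif n >= 5:
        labels.append("medium")
    else:
        labels.append("small")

# While loop: count how many halvings bring 100 below 1.
value, steps = 100.0, 0
while value >= 1:
    value /= 2
    steps += 1

# List comprehension: squares of the even numbers only.
even_squares = [n * n for n in numbers if n % 2 == 0]

print(labels, steps, even_squares)
```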

Data Handling Libraries:

Pandas:

Familiarize yourself with Pandas for data manipulation and analysis. Learn how to work with DataFrames and Series, and how to perform data cleaning and transformations.

NumPy:

Understand NumPy for numerical operations, working with arrays, and performing mathematical computations.

Data Visualization:

Matplotlib and Seaborn:

Learn basic plotting with Matplotlib and Seaborn for visualizing data and understanding trends and distributions.

Basic Programming Concepts:

Functions:

Know how to define and use functions to create reusable code.

File Handling:

Learn how to read from and write to files, which is important for handling datasets.
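Functions and file handling often go together when loading a dataset. The sketch below uses only the standard library; the helper names `write_rows` and `read_scores`, the file name, and the sample rows are hypothetical.

```python
import csv
import os
import tempfile


def write_rows(path, rows):
    """Write (name, score) rows to a CSV file with a header line."""
    with open(path, "w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["name", "score"])
        writer.writerows(rows)


def read_scores(path):
    """Read the CSV back into a {name: score} dictionary."""
    with open(path, newline="") as f:
        reader = csv.DictReader(f)
        return {row["name"]: float(row["score"]) for row in reader}


# Use a temporary directory so the example leaves no files behind.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "scores.csv")
    write_rows(path, [("ana", 91.5), ("ben", 78)])
    scores = read_scores(path)

print(scores)
```

The `with` blocks close the file automatically, even if an error occurs while reading or writing.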

Basic Statistics:

Descriptive Statistics:

Understand mean, median, mode, standard deviation, and other basic statistical concepts.
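These quantities can be checked by hand on a small sample. Here is a minimal sketch using the standard library's `statistics` module; the data values are made up.

```python
import statistics

data = [2, 4, 4, 4, 5, 5, 7, 9]

mean = statistics.mean(data)      # arithmetic average
median = statistics.median(data)  # middle value of the sorted data
mode = statistics.mode(data)      # most frequent value
pstdev = statistics.pstdev(data)  # population standard deviation
stdev = statistics.stdev(data)    # sample standard deviation (divides by n - 1)

print(mean, median, mode, pstdev, stdev)
```

The sample standard deviation is slightly larger than the population one because it divides by n - 1 instead of n.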

Probability:

Basic knowledge of probability is useful for understanding concepts like distributions and statistical tests.

Libraries for Machine Learning:

Scikit-learn:

Get familiar with Scikit-learn for basic machine learning tasks like classification, regression, and clustering. Understand how to use it for training models, evaluating performance, and making predictions.

Hands-on Practice:

Projects:

Work on small projects or Kaggle competitions to apply your Python skills in practical scenarios. This helps in understanding how to preprocess data, train models, and interpret results.

In summary, a good grasp of Python basics, data handling, and basic statistics will prepare you well for starting with machine learning. Hands-on practice with machine learning libraries and projects will further solidify your skills.

To learn more, drop me a message!


Working on a Python project for weeks and I'm adamant we use Numpy. I'm new to numpy, and my partner knows no Python. I'm convinced this'll be super efficient because Numpy is good.

We can't get Numpy to work, we spend weeks getting everything working and nothing is working.

We end up getting rid of all of it and using standard Python arrays.

Make more progress in one night than we did in weeks.

I was a gigantic asshat for insisting we do something a certain way. Legitimately detrimental to doing what we need to do. Now the professor and my partner are working on it, because this went from a class assignment to a potential research paper. (Worth noting that we couldn't have succeeded without assistance from the professor, so he's cool.) At the end of the day, everything I contributed has been removed except the weeks of wasted, sleepless nights, and that's specifically and unequivocally on my hands.

I feel stupid and asshattish and as if I owe someone something. I'm acting defensive and I shouldn't because they're right when they say that this should be done in the easy straightforward way.

I feel like lashing out and I can't do that. I'm aware I'm in the wrong. I was wrong and I'm angry that it feels like I'm a prick


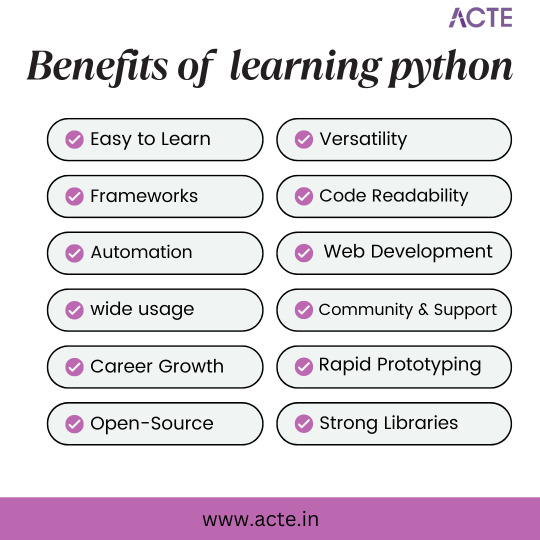

Exploring Python and Its Incredible Benefits:

Python, a versatile programming language known for its simplicity and adaptability, holds a prominent position in the technological landscape. Originating in the late 1980s, Python has garnered substantial attention due to its user-friendly syntax, making it an accessible choice for individuals at all levels of programming expertise. Notably, Python's design principles prioritize code clarity, empowering developers to articulate their ideas effectively and devise elegant solutions.

Python's applicability spans a multitude of domains, encompassing web development, data analysis, artificial intelligence, and scientific computing, among others. Its rich array of libraries and frameworks enhances efficiency in diverse tasks, including crafting dynamic websites, automating routine processes, processing and interpreting data, and constructing intricate applications.

The confluence of Python's flexibility and robust community support has driven its widespread adoption across varied industries. Whether one is a newcomer or an accomplished programmer, Python constitutes a potent toolset for software development and systematic problem-solving.

The ensuing enumeration underscores the merits of acquainting oneself with Python:

Accessible Learning: Python's straightforward syntax shortens the learning curve, enabling a focus on logical problem-solving rather than grappling with language intricacies.

Versatility in Application: Python's versatility finds expression in applications spanning web development, data analysis, AI, and more, cultivating diverse avenues for career exploration.

Data Insight and Analysis: Python's specialized libraries, such as NumPy and Pandas, empower adept data analysis and visualization, enhancing data-driven decision-making.

AI and Machine Learning Proficiency: Python's repository of libraries, including Scikit-Learn, empowers the creation of sophisticated algorithms and AI models.

Web Development Prowess: Python's frameworks, notably Django, facilitate the swift development of dynamic, secure web applications, underscoring its relevance in modern web environments.

Efficient Prototyping: Python's agile development capabilities facilitate the rapid creation of prototypes and experimental models, fostering innovation.

Community Collaboration: The dynamic Python community serves as a wellspring of resources and support, nurturing an environment of continuous learning and problem resolution.

Varied Career Prospects: Proficiency in Python translates to an array of roles across diverse sectors, reflecting the expanding demand for skilled practitioners.

Cross-Disciplinary Impact: Python's adaptability transcends industries, permeating sectors such as finance, healthcare, e-commerce, and scientific research.

Open-Source Advantage: Python's open-source nature encourages collaboration, fostering ongoing refinement and communal contribution.

Robust Toolset: Python's toolkit simplifies complex tasks and accelerates development, enhancing productivity.

Code Elegance: Python's elegant syntax fosters code legibility, promoting teamwork and fostering shared comprehension.

Professional Advancement: Proficiency in Python translates into promising career advancement opportunities and the potential for competitive compensation.

Future-Proofed Skills: Python's enduring prevalence and versatile utility ensure that acquired skills remain pertinent within evolving technological landscapes.

In summation, Python's stature as a versatile, user-friendly programming language stands as a testament to its enduring relevance. Its impact is palpable across industries, driving innovation and technological progress.

If you want to learn more about Python, feel free to contact ACTE Institution because they offer certifications and job opportunities. Experienced teachers can help you learn better. You can find these services both online and offline. Take things step by step and consider enrolling in a course if you’re interested.


How you can use python for data wrangling and analysis

Python is a powerful and versatile programming language that can be used for various purposes, such as web development, data science, machine learning, automation, and more. One of the most popular applications of Python is data analysis, which involves processing, cleaning, manipulating, and visualizing data to gain insights and make decisions.

In this article, we will introduce some of the basic concepts and techniques of data analysis using Python, focusing on the data wrangling and analysis process. Data wrangling is the process of transforming raw data into a more suitable format for analysis, while data analysis is the process of applying statistical methods and tools to explore, summarize, and interpret data.

To perform data wrangling and analysis with Python, we will use two of the most widely used libraries: Pandas and NumPy. Pandas is a library that provides high-performance data structures and operations for manipulating tabular data, such as Series and DataFrame. NumPy is a library that provides fast and efficient numerical computations on multidimensional arrays, such as ndarray.

We will also use some other libraries that are useful for data analysis, such as Matplotlib and Seaborn for data visualization, SciPy for scientific computing, and Scikit-learn for machine learning.

To follow along with this article, you will need to have Python 3.6 or higher installed on your computer, as well as the libraries mentioned above. You can install them using pip or conda commands. You will also need a code editor or an interactive environment, such as Jupyter Notebook or Google Colab.

Let’s get started with some examples of data wrangling and analysis with Python.

Example 1: Analyzing COVID-19 Data

In this example, we will use Python to analyze the COVID-19 data from the World Health Organization (WHO). The data contains the daily situation reports of confirmed cases and deaths by country from January 21, 2020 to October 23, 2023. You can download the data from here.

First, we need to import the libraries that we will use:

    import pandas as pd
    import numpy as np
    import matplotlib.pyplot as plt
    import seaborn as sns

Next, we need to load the data into a Pandas DataFrame:

    df = pd.read_csv('WHO-COVID-19-global-data.csv')

We can use the head() method to see the first five rows of the DataFrame:

    df.head()

| Date_reported | Country_code | Country | WHO_region | New_cases | Cumulative_cases | New_deaths | Cumulative_deaths |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 2020-01-21 | AF | Afghanistan | EMRO | 0 | 0 | 0 | 0 |
| 2020-01-22 | AF | Afghanistan | EMRO | 0 | 0 | 0 | 0 |
| 2020-01-23 | AF | Afghanistan | EMRO | 0 | 0 | 0 | 0 |
| 2020-01-24 | AF | Afghanistan | EMRO | 0 | 0 | 0 | 0 |
| 2020-01-25 | AF | Afghanistan | EMRO | 0 | 0 | 0 | 0 |

We can use the info() method to see some basic information about the DataFrame, such as the number of rows and columns, the data types of each column, and the memory usage:

    df.info()

Output:

    <class 'pandas.core.frame.DataFrame'>
    RangeIndex: 163800 entries, 0 to 163799
    Data columns (total 8 columns):
     #   Column             Non-Null Count   Dtype
    ---  ------             --------------   -----
     0   Date_reported      163800 non-null  object
     1   Country_code       162900 non-null  object
     2   Country            163800 non-null  object
     3   WHO_region         163800 non-null  object
     4   New_cases          163800 non-null  int64
     5   Cumulative_cases   163800 non-null  int64
     6   New_deaths         163800 non-null  int64
     7   Cumulative_deaths  163800 non-null  int64
    dtypes: int64(4), object(4)
    memory usage: 10.0+ MB

We can see that there are some missing values in the Country_code column. We can use the isnull() method to check which rows have missing values:

    df[df.Country_code.isnull()]

Output:

| Date_reported | Country_code | Country | WHO_region | New_cases | Cumulative_cases | New_deaths | Cumulative_deaths |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 2020-01-21 | NaN | International conveyance (Diamond Princess) | WPRO | 0 | 0 | 0 | 0 |
| 2020-01-22 | NaN | International conveyance (Diamond Princess) | WPRO | 0 | 0 | 0 | 0 |
| … | … | … | … | … | … | … | … |
| 2023-10-22 | NaN | International conveyance (Diamond Princess) | WPRO | 0 | 712 | 0 | 13 |
| 2023-10-23 | NaN | International conveyance (Diamond Princess) | WPRO | 0 | 712 | 0 | 13 |

We can see that the missing values are from the rows that correspond to the International conveyance (Diamond Princess), which is a cruise ship that had a COVID-19 outbreak in early 2020. Since this is not a country, we can either drop these rows or assign them a unique code, such as 'IC'. For simplicity, we will drop these rows using the dropna() method:

    df = df.dropna()
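The two options mentioned here (dropping the rows or assigning a code such as 'IC') can be sketched side by side. This is only an illustration on a tiny hand-made frame, not the WHO file; the three rows and their New_cases values are invented.

```python
import numpy as np
import pandas as pd

# A tiny stand-in for the real data, with one missing Country_code.
df = pd.DataFrame({
    "Country_code": ["AF", np.nan, "IN"],
    "Country": [
        "Afghanistan",
        "International conveyance (Diamond Princess)",
        "India",
    ],
    "New_cases": [0, 0, 5],
})

# Option 1: drop rows whose Country_code is missing.
dropped = df.dropna(subset=["Country_code"])

# Option 2: keep the rows and assign a placeholder code instead.
labelled = df.copy()
labelled["Country_code"] = labelled["Country_code"].fillna("IC")

print(dropped)
print(labelled)
```

Passing `subset=` limits the check to one column, so rows with missing values elsewhere are not dropped by accident.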

We can also check the data types of each column using the dtypes attribute:

    df.dtypes

Output:

    Date_reported        object
    Country_code         object
    Country              object
    WHO_region           object
    New_cases             int64
    Cumulative_cases      int64
    New_deaths            int64
    Cumulative_deaths     int64
    dtype: object

We can see that the Date_reported column is of type object, which means it is stored as a string. However, we want to work with dates as a datetime type, which allows us to perform date-related operations and calculations. We can use the to_datetime() function to convert the column to a datetime type:

    df.Date_reported = pd.to_datetime(df.Date_reported)
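To show what the datetime type makes possible, here is a small sketch on a hand-made frame (the three dates and case counts are invented): once converted, dates can be subtracted, split into components, and compared.

```python
import pandas as pd

df = pd.DataFrame({
    "Date_reported": ["2020-01-21", "2020-01-22", "2020-02-01"],
    "New_cases": [0, 1, 4],
})

# Convert the string column to datetime64.
df["Date_reported"] = pd.to_datetime(df["Date_reported"])

# Date arithmetic: number of days covered by the data.
span_days = (df["Date_reported"].max() - df["Date_reported"].min()).days

# The .dt accessor exposes date components such as the month.
months = df["Date_reported"].dt.month.tolist()

# Dates can be compared to filter rows.
february = df[df["Date_reported"] >= pd.Timestamp("2020-02-01")]

print(span_days, months, len(february))
```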

We can also use the describe() method to get some summary statistics of the numerical columns, such as the mean, standard deviation, minimum, maximum, and quartiles:

    df.describe()

Output:

| | New_cases | Cumulative_cases | New_deaths | Cumulative_deaths |
| --- | --- | --- | --- | --- |
| count | 162900.000000 | 162900.000000 | 162900.000000 | 162900.000000 |
| mean | 1138.300062 | 116955.140160 | 23.486789 | 2647.346237 |
| std | 6631.825489 | 665728.383017 | 137.256012 | 15435.833525 |
| min | -32952.000000 | -32952.000000 | -1918.000000 | -1918.000000 |
| 25% | -1.000000 | -1.000000 | -1.000000 | -1.000000 |
| 50% | -1.000000 | -1.000000 | -1.000000 | -1.000000 |
| 75% | -1.000000 | -1.000000 | -1.000000 | -1.000000 |
| max | -1 | -1 | -1 | -1 |

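We can see that there are some negative values in the New_cases, Cumulative_cases, New_deaths, and Cumulative_deaths columns, which are likely due to data errors or corrections. We can use the replace() method to replace the -1 values with zero:

    df = df.replace(-1, 0)

Note that replace(-1, 0) only changes values that are exactly -1. Other negative values, such as the minimum of -32952 in New_cases, are left unchanged and would need a separate step, such as clip(lower=0) on the numeric columns.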
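As a side-by-side sketch of how negative values can be handled, the example below compares `replace(-1, 0)` with `clip(lower=0)` on a small invented frame. Using `clip` here is an alternative suggested for illustration, not the article's own method, and the column values are made up.

```python
import pandas as pd

df = pd.DataFrame({
    "Country": ["A", "B", "C"],
    "New_cases": [-1, 25, -32952],
    "New_deaths": [0, -1918, 3],
})
numeric_cols = ["New_cases", "New_deaths"]

# replace(-1, 0) only touches cells that are exactly -1.
replaced = df.replace(-1, 0)

# clip(lower=0) raises every negative value in the chosen columns to zero.
clipped = df.copy()
clipped[numeric_cols] = clipped[numeric_cols].clip(lower=0)

print(replaced)
print(clipped)
```

Whether zeroing out negative corrections is appropriate depends on the analysis; for cumulative totals it can hide genuine downward revisions.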

Now that we have cleaned and prepared the data, we can start to analyze it and answer some questions, such as:

Which countries have the highest number of cumulative cases and deaths?

How has the pandemic evolved over time in different regions and countries?

What is the current situation of the pandemic in India?

To answer these questions, we will use some of the methods and attributes of Pandas DataFrame, such as:

groupby() : This method allows us to group the data by one or more columns and apply aggregation functions, such as sum, mean, count, etc., to each group.

sort_values() : This method allows us to sort the data by one or more columns, in ascending or descending order.

loc[] : This attribute allows us to select a subset of the data by labels or conditions.

plot() : This method allows us to create various types of plots from the data, such as line, bar, pie, scatter, etc.
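Three of the methods listed above can be sketched on a small stand-in frame. The column names follow the WHO file, but the rows and case counts are invented; plot() is left out so the example runs without a display.

```python
import pandas as pd

df = pd.DataFrame({
    "Country": ["India", "India", "Brazil", "Brazil", "Japan"],
    "WHO_region": ["SEARO", "SEARO", "AMRO", "AMRO", "WPRO"],
    "New_cases": [10, 20, 5, 15, 7],
})

# groupby(): total new cases per country.
totals = df.groupby("Country")["New_cases"].sum()

# sort_values(): rank countries from most to fewest cases.
ranked = totals.sort_values(ascending=False)

# loc[]: select only the rows for one country using a condition.
india = df.loc[df["Country"] == "India"]

print(ranked)
print(india)
```

Calling `ranked.plot(kind="bar")` would then draw the ranking as a bar chart, assuming Matplotlib is installed.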

If you want to learn Python from scratch, check out e-Tuitions to learn Python online. They teach Python and other coding languages, have some of the best teachers for their students, and, most importantly, let you book a free demo for any class.

#python#coding#programming#programming languages#python tips#python learning#python programming#python development


25 Udemy Paid Courses for Free with Certification (Only for Limited Time)

2023 Complete SQL Bootcamp from Zero to Hero in SQL

Become an expert in SQL by learning through concept & Hands-on coding :)

What you'll learn

- Use SQL to query a database
- Be comfortable putting SQL on their resume
- Replicate real-world situations and query reports
- Use SQL to perform data analysis
- Learn to perform GROUP BY statements
- Model real-world data and generate reports using SQL
- Learn Oracle SQL by Professionally Designed Content Step by Step!
- Solve any SQL-related Problems by Yourself Creating Analytical Solutions!
- Write, Read and Analyze Any SQL Queries Easily and Learn How to Play with Data!
- Become a Job-Ready SQL Developer by Learning All the Skills You will Need!
- Write complex SQL statements to query the database and gain critical insight on data
- Transition from the Very Basics to a Point Where You can Effortlessly Work with Large SQL Queries
- Learn Advanced Querying Techniques
- Understand the difference between the INNER JOIN, LEFT/RIGHT OUTER JOIN, and FULL OUTER JOIN
- Complete SQL statements that use aggregate functions
- Using joins, return columns from multiple tables in the same query

Enroll Now 👇👇👇👇👇👇👇 https://www.book-somahar.com/2023/10/25-udemy-paid-courses-for-free-with.html

Python Programming Complete Beginners Course Bootcamp 2023

2023 Complete Python Bootcamp || Python Beginners to advanced || Python Master Class || Mega Course

What you'll learn

- Basics in Python programming
- Control structures, Containers, Functions & Modules
- OOPS in Python
- How python is used in the Space Sciences
- Working with lists in python
- Working with strings in python
- Application of Python in Mars Rovers sent by NASA

Enroll Now 👇👇👇👇👇👇👇 https://www.book-somahar.com/2023/10/25-udemy-paid-courses-for-free-with.html

Learn PHP and MySQL for Web Application and Web Development

Unlock the Power of PHP and MySQL: Level Up Your Web Development Skills Today

What you'll learn

- Use of PHP Function
- Use of PHP Variables
- Use of MySql
- Use of Database

Enroll Now 👇👇👇👇👇👇👇 https://www.book-somahar.com/2023/10/25-udemy-paid-courses-for-free-with.html

T-Shirt Design for Beginner to Advanced with Adobe Photoshop

Unleash Your Creativity: Master T-Shirt Design from Beginner to Advanced with Adobe Photoshop

What you'll learn

- Function of Adobe Photoshop
- Tools of Adobe Photoshop
- T-Shirt Design Fundamentals
- T-Shirt Design Projects

Enroll Now 👇👇👇👇👇👇👇 https://www.book-somahar.com/2023/10/25-udemy-paid-courses-for-free-with.html

Complete Data Science BootCamp

Learn about Data Science, Machine Learning and Deep Learning and build 5 different projects.

What you'll learn

- Learn about Libraries like Pandas and Numpy which are heavily used in Data Science.
- Build Impactful visualizations and charts using Matplotlib and Seaborn.
- Learn about Machine Learning LifeCycle and different ML algorithms and their implementation in sklearn.
- Learn about Deep Learning and Neural Networks with TensorFlow and Keras.
- Build 5 complete projects based on the concepts covered in the course.

Enroll Now 👇👇👇👇👇👇👇 https://www.book-somahar.com/2023/10/25-udemy-paid-courses-for-free-with.html

Essentials User Experience Design Adobe XD UI UX Design

Learn UI Design, User Interface, User Experience design, UX design & Web Design

What you'll learn

- How to become a UX designer
- Become a UI designer
- Full website design
- All the techniques used by UX professionals

Enroll Now 👇👇👇👇👇👇👇 https://www.book-somahar.com/2023/10/25-udemy-paid-courses-for-free-with.html

Build a Custom E-Commerce Site in React + JavaScript Basics

Build a Fully Customized E-Commerce Site with Product Categories, Shopping Cart, and Checkout Page in React.

What you'll learn

- Introduction to the Document Object Model (DOM)
- The Foundations of JavaScript
- JavaScript Arithmetic Operations
- Working with Arrays, Functions, and Loops in JavaScript
- JavaScript Variables, Events, and Objects
- JavaScript Hands-On - Build a Photo Gallery and Background Color Changer
- Foundations of React
- How to Scaffold an Existing React Project
- Introduction to JSON Server
- Styling an E-Commerce Store in React and Building out the Shop Categories
- Introduction to Fetch API and React Router
- The concept of "Context" in React
- Building a Search Feature in React
- Validating Forms in React

Enroll Now 👇👇👇👇👇👇👇 https://www.book-somahar.com/2023/10/25-udemy-paid-courses-for-free-with.html

Complete Bootstrap & React Bootcamp with Hands-On Projects

Learn to Build Responsive, Interactive Web Apps using Bootstrap and React.

What you'll learn

- Learn the Bootstrap Grid System
- Learn to work with Bootstrap Three Column Layouts
- Learn to Build Bootstrap Navigation Components
- Learn to Style Images using Bootstrap
- Build Advanced, Responsive Menus using Bootstrap
- Build Stunning Layouts using Bootstrap Themes
- Learn the Foundations of React
- Work with JSX, and Functional Components in React
- Build a Calculator in React
- Learn the React State Hook
- Debug React Projects
- Learn to Style React Components
- Build a Single and Multi-Player Connect-4 Clone with AI
- Learn React Lifecycle Events
- Learn React Conditional Rendering
- Build a Fully Custom E-Commerce Site in React
- Learn the Foundations of JSON Server
- Work with React Router

Enroll Now 👇👇👇👇👇👇👇 https://www.book-somahar.com/2023/10/25-udemy-paid-courses-for-free-with.html

Build an Amazon Affiliate E-Commerce Store from Scratch

Earn Passive Income by Building an Amazon Affiliate E-Commerce Store using WordPress, WooCommerce, WooZone, & Elementor

What you'll learn

- Registering a Domain Name & Setting up Hosting
- Installing WordPress CMS on Your Hosting Account
- Navigating the WordPress Interface
- The Advantages of WordPress
- Securing a WordPress Installation with an SSL Certificate
- Installing Custom Themes for WordPress
- Installing WooCommerce, Elementor, & WooZone Plugins
- Creating an Amazon Affiliate Account
- Importing Products from Amazon to an E-Commerce Store using WooZone Plugin
- Building a Customized Shop with Menus, Headers, Branding, & Sidebars
- Building WordPress Pages, such as Blogs, About Pages, and Contact Us Forms
- Customizing Product Pages on a WordPress Power E-Commerce Site
- Generating Traffic and Sales for Your Newly Published Amazon Affiliate Store

Enroll Now 👇👇👇👇👇👇👇 https://www.book-somahar.com/2023/10/25-udemy-paid-courses-for-free-with.html

The Complete Beginner Course to Optimizing ChatGPT for Work

Learn how to make the most of ChatGPT's capabilities in efficiently aiding you with your tasks.

What you'll learn

- Learn how to harness ChatGPT's functionalities to efficiently assist you in various tasks, maximizing productivity and effectiveness.
- Delve into the captivating fusion of product development and SEO, discovering effective strategies to identify challenges, create innovative tools, and expertly
- Understand how ChatGPT is a technological leap, akin to the impact of iconic tools like Photoshop and Excel, and how it can revolutionize work methodologies thr
- Showcase your learning by creating a transformative project, optimizing your approach to work by identifying tasks that can be streamlined with artificial intel

Enroll Now 👇👇👇👇👇👇👇 https://www.book-somahar.com/2023/10/25-udemy-paid-courses-for-free-with.html

AWS, JavaScript, React | Deploy Web Apps on the Cloud

Cloud Computing | Linux Foundations | LAMP Stack | DBMS | Apache | NGINX | AWS IAM | Amazon EC2 | JavaScript | React

What you'll learn

- Foundations of Cloud Computing on AWS and Linode
- Cloud Computing Service Models (IaaS, PaaS, SaaS)
- Deploying and Configuring a Virtual Instance on Linode and AWS
- Secure Remote Administration for Virtual Instances using SSH
- Working with SSH Key Pair Authentication
- The Foundations of Linux (Maintenance, Directory Commands, User Accounts, Filesystem)
- The Foundations of Web Servers (NGINX vs Apache)
- Foundations of Databases (SQL vs NoSQL), Database Transaction Standards (ACID vs CAP)
- Key Terminology for Full Stack Development and Cloud Administration
- Installing and Configuring LAMP Stack on Ubuntu (Linux, Apache, MariaDB, PHP)
- Server Security Foundations (Network vs Hosted Firewalls)
- Horizontal and Vertical Scaling of a virtual instance on Linode using NodeBalancers
- Creating Manual and Automated Server Images and Backups on Linode
- Understanding the Cloud Computing Phenomenon as Applicable to AWS
- The Characteristics of Cloud Computing as Applicable to AWS
- Cloud Deployment Models (Private, Community, Hybrid, VPC)
- Foundations of AWS (Registration, Global vs Regional Services, Billing Alerts, MFA)
- AWS Identity and Access Management (Mechanics, Users, Groups, Policies, Roles)
- Amazon Elastic Compute Cloud (EC2) - (AMIs, EC2 Users, Deployment, Elastic IP, Security Groups, Remote Admin)
- Foundations of the Document Object Model (DOM)
- Manipulating the DOM
- Foundations of JavaScript Coding (Variables, Objects, Functions, Loops, Arrays, Events)
- Foundations of ReactJS (Code Pen, JSX, Components, Props, Events, State Hook, Debugging)
- Intermediate React (Passing Props, Destructuring, Styling, Key Property, AI, Conditional Rendering, Deployment)
- Building a Fully Customized E-Commerce Site in React
- Intermediate React Concepts (JSON Server, Fetch API, React Router, Styled Components, Refactoring, UseContext Hook, UseReducer, Form Validation)

Enroll Now 👇👇👇👇👇👇👇 https://www.book-somahar.com/2023/10/25-udemy-paid-courses-for-free-with.html

Run Multiple Sites on a Cloud Server: AWS & Digital Ocean

Server Deployment | Apache Configuration | MySQL | PHP | Virtual Hosts | NS Records | DNS | AWS Foundations | EC2

What you'll learn

- A solid understanding of the fundamentals of remote server deployment and configuration, including network configuration and security.
- The ability to install and configure the LAMP stack, including the Apache web server, MySQL database server, and PHP scripting language.
- Expertise in hosting multiple domains on one virtual server, including setting up virtual hosts and managing domain names.
- Proficiency in virtual host file configuration, including creating and configuring virtual host files and understanding various directives and parameters.
- Mastery in DNS zone file configuration, including creating and managing DNS zone files and understanding various record types and their uses.
- A thorough understanding of AWS foundations, including the AWS global infrastructure, key AWS services, and features.
- A deep understanding of Amazon Elastic Compute Cloud (EC2) foundations, including creating and managing instances, configuring security groups, and networking.
- The ability to troubleshoot common issues related to remote server deployment, LAMP stack installation and configuration, virtual host file configuration, and D
- An understanding of best practices for remote server deployment and configuration, including security considerations and optimization for performance.
- Practical experience in working with remote servers and cloud-based solutions through hands-on labs and exercises.
- The ability to apply the knowledge gained from the course to real-world scenarios and challenges faced in the field of web hosting and cloud computing.
- A competitive edge in the job market, with the ability to pursue career opportunities in web hosting and cloud computing.

Cloud-Powered Web App Development with AWS and PHP

AWS Foundations | IAM | Amazon EC2 | Load Balancing | Auto-Scaling Groups | Route 53 | PHP | MySQL | App Deployment

What you'll learn

Understanding of cloud computing and Amazon Web Services (AWS) Proficiency in creating and configuring AWS accounts and environments Knowledge of AWS pricing and billing models Mastery of Identity and Access Management (IAM) policies and permissions Ability to launch and configure Elastic Compute Cloud (EC2) instances Familiarity with security groups, key pairs, and Elastic IP addresses Competency in using AWS storage services, such as Elastic Block Store (EBS) and Simple Storage Service (S3) Expertise in creating and using Elastic Load Balancers (ELB) and Auto Scaling Groups (ASG) for load balancing and scaling web applications Knowledge of DNS management using Route 53 Proficiency in PHP programming language fundamentals Ability to interact with databases using PHP and execute SQL queries Understanding of PHP security best practices, including SQL injection prevention and user authentication Ability to design and implement a database schema for a web application Mastery of PHP scripting to interact with a database and implement user authentication using sessions and cookies Competency in creating a simple blog interface using HTML and CSS and protecting the blog content using PHP authentication. Students will gain practical experience in creating and deploying a member-only blog with user authentication using PHP and MySQL on AWS.

CSS, Bootstrap, JavaScript And PHP Stack Complete Course

CSS, Bootstrap And JavaScript And PHP Complete Frontend and Backend Course

What you'll learn

Introduction to Frontend and Backend Technologies Introduction to CSS, Bootstrap, and JavaScript Concepts and the PHP Programming Language Getting Started with CSS Styles, CSS 2D Transforms, CSS 3D Transforms Bootstrap Crash Course with Bootstrap Concepts Bootstrap Grid System, Forms, Badges, and Alerts Getting Started with JavaScript Variables, Values and Data Types, Operators and Operands Write JavaScript Scripts and Gain Knowledge of General JavaScript Programming Concepts PHP Section Introduction to PHP, Various Operator Types, PHP Arrays, PHP Conditional Statements Getting Started with PHP Function Statements and PHP Decision Making PHP 7 Concepts PHP CSPRNG and PHP Scalar Declarations

Learn HTML - For Beginners

Learn how to create web pages using HTML

What you'll learn

How to Code in HTML Structure of an HTML Page Text Formatting in HTML Embedding Videos Creating Links Anchor Tags Tables & Nested Tables Building Forms Embedding Iframes Inserting Images

Learn Bootstrap - For Beginners

Learn to create mobile-responsive web pages using Bootstrap

What you'll learn

Bootstrap Page Structure Bootstrap Grid System Bootstrap Layouts Bootstrap Typography Styling Images Bootstrap Tables, Buttons, Badges, & Progress Bars Bootstrap Pagination Bootstrap Panels Bootstrap Menus & Navigation Bars Bootstrap Carousel & Modals Bootstrap Scrollspy Bootstrap Themes

JavaScript, Bootstrap, & PHP - Certification for Beginners

A Comprehensive Guide for Beginners interested in learning JavaScript, Bootstrap, & PHP

What you'll learn

Master Client-Side and Server-Side Interactivity using JavaScript, Bootstrap, & PHP Learn to create mobile responsive webpages using Bootstrap Learn to create client and server-side validated input forms Learn to interact with a MySQL Database using PHP

Linode: Build and Deploy Responsive Websites on the Cloud

Cloud Computing | IaaS | Linux Foundations | Apache + DBMS | LAMP Stack | Server Security | Backups | HTML | CSS

What you'll learn

Understand the fundamental concepts and benefits of Cloud Computing and its service models. Learn how to create, configure, and manage virtual servers in the cloud using Linode. Understand the basic concepts of Linux operating system, including file system structure, command-line interface, and basic Linux commands. Learn how to manage users and permissions, configure network settings, and use package managers in Linux. Learn about the basic concepts of web servers, including Apache and Nginx, and databases such as MySQL and MariaDB. Learn how to install and configure web servers and databases on Linux servers. Learn how to install and configure LAMP stack to set up a web server and database for hosting dynamic websites and web applications. Understand server security concepts such as firewalls, access control, and SSL certificates. Learn how to secure servers using firewalls, manage user access, and configure SSL certificates for secure communication. Learn how to scale servers to handle increasing traffic and load. Learn about load balancing, clustering, and auto-scaling techniques. Learn how to create and manage server images. Understand the basic structure and syntax of HTML, including tags, attributes, and elements. Understand how to apply CSS styles to HTML elements, create layouts, and use CSS frameworks.

PHP & MySQL - Certification Course for Beginners

Learn to Build Database Driven Web Applications using PHP & MySQL

What you'll learn

PHP Variables, Syntax, Variable Scope, Keywords Echo vs. Print and Data Output PHP Strings, Constants, Operators PHP Conditional Statements PHP Elseif, Switch, Statements PHP Loops - While, For PHP Functions PHP Arrays, Multidimensional Arrays, Sorting Arrays Working with Forms - Post vs. Get PHP Server Side - Form Validation Creating MySQL Databases Database Administration with PhpMyAdmin Administering Database Users, and Defining User Roles SQL Statements - Select, Where, And, Or, Insert, Get Last ID MySQL Prepared Statements and Multiple Record Insertion PHP Isset MySQL - Updating Records

Linode: Deploy Scalable React Web Apps on the Cloud

Cloud Computing | IaaS | Server Configuration | Linux Foundations | Database Servers | LAMP Stack | Server Security

What you'll learn

Introduction to Cloud Computing Cloud Computing Service Models (IaaS, PaaS, SaaS) Cloud Server Deployment and Configuration (TFA, SSH) Linux Foundations (File System, Commands, User Accounts) Web Server Foundations (NGINX vs Apache, SQL vs NoSQL, Key Terms) LAMP Stack Installation and Configuration (Linux, Apache, MariaDB, PHP) Server Security (Software & Hardware Firewall Configuration) Server Scaling (Vertical vs Horizontal Scaling, IP Swaps, Load Balancers) React Foundations (Setup) Building a Calculator in React (Code Pen, JSX, Components, Props, Events, State Hook) Building a Connect-4 Clone in React (Passing Arguments, Styling, Callbacks, Key Property) Building an E-Commerce Site in React (JSON Server, Fetch API, Refactoring)

Internet and Web Development Fundamentals

Learn how the Internet Works and Setup a Testing & Production Web Server

What you'll learn

How the Internet Works Internet Protocols (HTTP, HTTPS, SMTP) The Web Development Process Planning a Web Application Types of Web Hosting (Shared, Dedicated, VPS, Cloud) Domain Name Registration and Administration Nameserver Configuration Deploying a Testing Server using WAMP & MAMP Deploying a Production Server on Linode, Digital Ocean, or AWS Executing Server Commands through a Command Console Server Configuration on Ubuntu Remote Desktop Connection and VNC SSH Server Authentication FTP Client Installation FTP Uploading

Linode: Web Server and Database Foundations

Cloud Computing | Instance Deployment and Config | Apache | NGINX | Database Management Systems (DBMS)

What you'll learn

Introduction to Cloud Computing (Cloud Service Models) Navigating the Linode Cloud Interface Remote Administration using PuTTY, Terminal, SSH Foundations of Web Servers (Apache vs. NGINX) SQL vs NoSQL Databases Database Transaction Standards (ACID vs. CAP Theorem) Key Terms relevant to Cloud Computing, Web Servers, and Database Systems

Java Training Complete Course 2022

Learn Java Programming language with Java Complete Training Course 2022 for Beginners

What you'll learn

You will learn how to write a complete Java program that takes user input, processes and outputs the results You will learn OOPS concepts in Java You will learn java concepts such as console output, Java Variables and Data Types, Java Operators And more You will be able to use Java for Selenium in testing and development

Learn To Create AI Assistant (JARVIS) With Python

How To Create AI Assistant (JARVIS) With Python Like the One from Marvel's Iron Man Movie

What you'll learn

How to create a personalized artificial intelligence assistant How to create JARVIS AI How to create an AI assistant

Keyword Research, Free Backlinks, Improve SEO -Long Tail Pro

LongTailPro is the keyword research service we at Coursenvy use for ALL our clients! In this course, find SEO keywords,

What you'll learn

Learn everything Long Tail Pro has to offer from A to Z! Optimize keywords in your page/post titles, meta descriptions, social media bios, article content, and more! Create content that caters to the NEW Search Engine Algorithms and find endless keywords to rank for in ALL the search engines! Learn how to use ALL of the top-rated Keyword Research software online! Master analyzing your COMPETITION'S Keywords! Get High-Quality Backlinks that will ACTUALLY Help your Page Rank!

#udemy#free course#paid course for free#design#development#ux ui#xd#figma#web development#python#javascript#php#java#cloud

The Best Open-Source Tools for Data Science in 2025

Data science in 2025 is thriving, driven by a robust ecosystem of open-source tools that empower professionals to extract insights, build predictive models, and deploy data-driven solutions at scale. This year, the landscape is more dynamic than ever, with established favorites and emerging contenders shaping how data scientists work. Here’s an in-depth look at the best open-source tools that are defining data science in 2025.

1. Python: The Universal Language of Data Science

Python remains the cornerstone of data science. Its intuitive syntax, extensive libraries, and active community make it the go-to language for everything from data wrangling to deep learning. Libraries such as NumPy and Pandas streamline numerical computations and data manipulation, while scikit-learn is the gold standard for classical machine learning tasks.

NumPy: Efficient array operations and mathematical functions.

Pandas: Powerful data structures (DataFrames) for cleaning, transforming, and analyzing structured data.

scikit-learn: Comprehensive suite for classification, regression, clustering, and model evaluation.

Python’s popularity is reflected in the 2025 Stack Overflow Developer Survey, with 53% of developers using it for data projects.

2. R and RStudio: Statistical Powerhouses

R continues to shine in academia and industries where statistical rigor is paramount. The RStudio IDE enhances productivity with features for scripting, debugging, and visualization. R’s package ecosystem—especially tidyverse for data manipulation and ggplot2 for visualization—remains unmatched for statistical analysis and custom plotting.

Shiny: Build interactive web applications directly from R.

CRAN: Over 18,000 packages for every conceivable statistical need.

R is favored by 36% of users, especially for advanced analytics and research.

3. Jupyter Notebooks and JupyterLab: Interactive Exploration

Jupyter Notebooks are indispensable for prototyping, sharing, and documenting data science workflows. They support live code (Python, R, Julia, and more), visualizations, and narrative text in a single document. JupyterLab, the next-generation interface, offers enhanced collaboration and modularity.

Over 15 million notebooks hosted as of 2025, with 80% of data analysts using them regularly.

4. Apache Spark: Big Data at Lightning Speed

As data volumes grow, Apache Spark stands out for its ability to process massive datasets rapidly, both in batch and real-time. Spark’s distributed architecture, support for SQL, machine learning (MLlib), and compatibility with Python, R, Scala, and Java make it a staple for big data analytics.

65% increase in Spark adoption since 2023, reflecting its scalability and performance.

5. TensorFlow and PyTorch: Deep Learning Titans

For machine learning and AI, TensorFlow and PyTorch dominate. Both offer flexible APIs for building and training neural networks, with strong community support and integration with cloud platforms.

TensorFlow: Preferred for production-grade models and scalability; used by over 33% of ML professionals.

PyTorch: Valued for its dynamic computation graph and ease of experimentation, especially in research settings.

6. Data Visualization: Plotly, D3.js, and Apache Superset

Effective data storytelling relies on compelling visualizations:

Plotly: Python-based, supports interactive and publication-quality charts; easy for both static and dynamic visualizations.

D3.js: JavaScript library for highly customizable, web-based visualizations; ideal for specialists seeking full control.

Apache Superset: Open-source dashboarding platform for interactive, scalable visual analytics; increasingly adopted for enterprise BI.

Tableau Public, though not fully open-source, is also popular for sharing interactive visualizations with a broad audience.

7. Pandas: The Data Wrangling Workhorse

Pandas remains the backbone of data manipulation in Python, powering up to 90% of data wrangling tasks. Its DataFrame structure simplifies complex operations, making it essential for cleaning, transforming, and analyzing large datasets.
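
As a rough illustration of the cleaning and transforming steps mentioned above, here is a minimal sketch. The column names and values are made up for the example, and real datasets will usually need more careful handling of missing data than a simple drop.

```python
import numpy as np
import pandas as pd

# Hypothetical raw records with a missing value and inconsistent text labels.
raw = pd.DataFrame({
    "region": ["north", "South", "north", "south "],
    "units": [10, np.nan, 7, 3],
    "price": [2.5, 4.0, 2.5, 4.0],
})

clean = (
    raw.assign(region=raw["region"].str.strip().str.lower())  # normalize labels
       .dropna(subset=["units"])                              # drop rows missing units
       .assign(revenue=lambda df: df["units"] * df["price"])  # derive a new column
)

# Aggregate revenue per region.
revenue_by_region = clean.groupby("region")["revenue"].sum()
print(revenue_by_region)
```

Chaining `assign`, `dropna`, and `groupby` keeps each step visible, which tends to make a cleaning pipeline easier to review than a series of in-place mutations.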

8. Scikit-learn: Machine Learning Made Simple

scikit-learn is the default choice for classical machine learning. Its consistent API, extensive documentation, and wide range of algorithms make it ideal for tasks such as classification, regression, clustering, and model validation.
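
The "consistent API" point is easiest to see in code. The sketch below fits two unrelated classifiers with the same `fit` and `score` calls; the choice of the bundled iris dataset, the two models, and the split settings are assumptions made purely for illustration.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Two different algorithms, one interface: fit on training data, score on held-out data.
models = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "decision_tree": DecisionTreeClassifier(random_state=0),
}
scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    scores[name] = model.score(X_test, y_test)  # mean accuracy on the test split
    print(f"{name}: {scores[name]:.2f}")
```

Because every estimator exposes the same methods, swapping algorithms is usually a one-line change.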

9. Apache Airflow: Workflow Orchestration

As data pipelines become more complex, Apache Airflow has emerged as the go-to tool for workflow automation and orchestration. Its user-friendly interface and scalability have driven a 35% surge in adoption among data engineers in the past year.

10. MLflow: Model Management and Experiment Tracking

MLflow streamlines the machine learning lifecycle, offering tools for experiment tracking, model packaging, and deployment. Over 60% of ML engineers use MLflow for its integration capabilities and ease of use in production environments.

11. Docker and Kubernetes: Reproducibility and Scalability

Containerization with Docker and orchestration via Kubernetes ensure that data science applications run consistently across environments. These tools are now standard for deploying models and scaling data-driven services in production.

12. Emerging Contenders: Streamlit and More

Streamlit: Rapidly build and deploy interactive data apps with minimal code, gaining popularity for internal dashboards and quick prototypes.

Redash: SQL-based visualization and dashboarding tool, ideal for teams needing quick insights from databases.

Kibana: Real-time data exploration and monitoring, especially for log analytics and anomaly detection.

Conclusion: The Open-Source Advantage in 2025

Open-source tools continue to drive innovation in data science, making advanced analytics accessible, scalable, and collaborative. Mastery of these tools is not just a technical advantage—it’s essential for staying competitive in a rapidly evolving field. Whether you’re a beginner or a seasoned professional, leveraging this ecosystem will unlock new possibilities and accelerate your journey from raw data to actionable insight.

The future of data science is open, and in 2025, these tools are your ticket to building smarter, faster, and more impactful solutions.

#python#r#rstudio#jupyternotebook#jupyterlab#apachespark#tensorflow#pytorch#plotly#d3js#apachesuperset#pandas#scikitlearn#apacheairflow#mlflow#docker#kubernetes#streamlit#redash#kibana#nschool academy#datascience

Python for Data Science: What You Need to Know

Data is at the heart of every modern business decision, and Python is the tool that helps professionals make sense of it. Whether you're analyzing trends, building predictive models, or cleaning datasets, Python offers the simplicity and power needed to get the job done. If you're aiming for a career in this high-demand field, enrolling in the best python training in Hyderabad can help you master the language and its data science applications effectively.

Why Python is Perfect for Data Science

The Python programming language has become the language of choice for data science, and for good reason. It’s easy to learn, highly readable, and has a massive community supporting it. Whether you’re a beginner or someone with a non-technical background, Python’s clean syntax allows you to focus more on problem-solving rather than worrying about complex code structures.

Must-Know Python Libraries for Data Science

To work efficiently in data science, you’ll need to get comfortable with several powerful Python libraries:

NumPy – for numerical calculations and array operations.

Pandas – for working with structured data like tables and CSV files.

Matplotlib and Seaborn – for creating charts and visualizing data patterns.

Scikit-learn – for implementing machine learning algorithms.

TensorFlow or PyTorch – for deep learning projects.

These libraries are the foundation of most data science workflows.

Core Skills Every Data Scientist Needs

Learning Python is just the beginning. A successful data scientist also needs to:

Clean and prepare raw data (data wrangling).

Analyze data using statistics and visualizations.

Build, train, and test machine learning models.

Communicate findings through clear reports and dashboards.

Practicing these skills on real-world datasets will help you gain practical experience that employers value.

How to Get Started the Right Way

There are countless tutorials online, but a structured training program gives you a clearer path to success. The right course will cover everything from Python basics to advanced machine learning, including projects, assignments, and mentor support. This kind of guided learning builds both your confidence and your portfolio.

Conclusion: Learn Python for Data Science at SSSIT

Python is the backbone of data science, and knowing how to use it can unlock exciting career opportunities in AI, analytics, and more. You don't have to figure everything out on your own. Join a professional course that offers step-by-step learning, real-time projects, and expert mentoring. For a future-proof start, enroll at SSSIT Computer Education, known for offering the best python training in Hyderabad. Your data science journey starts here!

#best python training in hyderabad#best python training in kukatpally#best python training in KPHB#Best python training institute in Hyderabad

Financial Modeling in the Age of AI: Skills Every Investment Banker Needs in 2025

In 2025, the landscape of financial modeling is undergoing a profound transformation. What was once a painstaking, spreadsheet-heavy process is now being reshaped by Artificial Intelligence (AI) and machine learning tools that automate calculations, generate predictive insights, and even draft investment memos.

But here's the truth: AI isn't replacing investment bankers—it's reshaping what they do.

To stay ahead in this rapidly evolving environment, professionals must go beyond traditional Excel skills and learn how to collaborate with AI. Whether you're a finance student, an aspiring analyst, or a working professional looking to upskill, mastering AI-augmented financial modeling is essential. And one of the best ways to do that is by enrolling in a hands-on, industry-relevant investment banking course in Chennai.

What is Financial Modeling, and Why Does It Matter?

Financial modeling is the art and science of creating representations of a company's financial performance. These models are crucial for:

Valuing companies (e.g., through DCF or comparable company analysis)

Making investment decisions

Forecasting growth and profitability

Evaluating mergers, acquisitions, or IPOs

Traditionally built in Excel, models used to take hours—or days—to build and test. Today, AI-powered assistants can build basic frameworks in minutes.

How AI Is Revolutionizing Financial Modeling

The impact of AI on financial modeling is nothing short of revolutionary:

1. Automated Data Gathering and Cleaning

AI tools can automatically extract financial data from balance sheets, income statements, or even PDFs—eliminating hours of manual entry.

2. AI-Powered Forecasting

Machine learning algorithms can analyze historical trends and provide data-driven forecasts far more quickly and accurately than static models.

3. Instant Model Generation

AI assistants like ChatGPT with code interpreters, or Excel’s new Copilot feature, can now generate model templates (e.g., LBO, DCF) instantly, letting analysts focus on insights rather than formulas.

4. Scenario Analysis and Sensitivity Testing

With AI, you can generate multiple scenarios—best case, worst case, expected case—in seconds. These tools can even flag risks and assumptions automatically.

However, the human role isn't disappearing. Investment bankers are still needed to define model logic, interpret results, evaluate market sentiment, and craft the narrative behind the numbers.

What AI Can’t Do (Yet): The Human Advantage

Despite all the hype, AI still lacks:

Business intuition

Ethical judgment

Client understanding

Strategic communication skills

This means future investment bankers need a hybrid skill set—equally comfortable with financial principles and modern tools.

Essential Financial Modeling Skills for 2025 and Beyond

Here are the most in-demand skills every investment banker needs today:

1. Excel + AI Tool Proficiency

Excel isn’t going anywhere, but it’s getting smarter. Learn to use AI-enhanced functions, dynamic arrays, macros, and Copilot features for rapid modeling.

2. Python and SQL

Python libraries like Pandas, NumPy, and Scikit-learn are used for custom forecasting and data analysis. SQL is crucial for pulling financial data from large databases.
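
To make the SQL side concrete, here is a small sketch using Python's built-in `sqlite3` module. The in-memory database, the `financials` table, and the company names are hypothetical stand-ins for whatever data store a bank would actually use.

```python
import sqlite3

# In-memory database standing in for a real financial data store (hypothetical schema).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE financials (company TEXT, year INTEGER, revenue REAL)")
conn.executemany(
    "INSERT INTO financials VALUES (?, ?, ?)",
    [("Acme", 2023, 120.0), ("Acme", 2024, 150.0), ("Globex", 2024, 90.0)],
)

# Parameterized query: pull one company's revenue history, oldest first.
rows = conn.execute(
    "SELECT year, revenue FROM financials WHERE company = ? ORDER BY year",
    ("Acme",),
).fetchall()
conn.close()

# Year-over-year growth from the two rows returned.
(_, first_rev), (_, last_rev) = rows
growth = last_rev / first_rev - 1
print(rows, f"growth={growth:.1%}")
```

Passing values through `?` placeholders rather than string formatting is the usual way to avoid SQL injection.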

3. Data Visualization

Tools like Power BI, Tableau, and Excel dashboards help communicate results effectively.

4. Valuation Techniques

DCF, LBO, M&A models, and comparable company analysis remain core to investment banking.

5. AI Integration and Prompt Engineering

Knowing how to interact with AI (e.g., writing effective prompts for ChatGPT to generate model logic) is a power skill in 2025.

Why Enroll in an Investment Banking Course in Chennai?

As AI transforms finance, the demand for skilled professionals who can use technology without losing touch with core finance principles is soaring.

If you're based in South India, enrolling in an investment banking course in Chennai can set you on the path to success. Here's why:

✅ Hands-on Training

Courses now include live financial modeling projects, AI-assisted model-building, and exposure to industry-standard tools.

✅ Expert Mentors

Learn from professionals who’ve worked in top global banks, PE firms, and consultancies.

✅ Placement Support

With Chennai growing as a finance and tech hub, top employers are hiring from local programs offering real-world skills.

✅ Industry Relevance

The best courses in Chennai combine finance, analytics, and AI—helping you become job-ready in the modern investment banking world.

Whether you're a student, working professional, or career switcher, investing in the right course today can prepare you for the next decade of finance.

Case Study: Using AI in a DCF Model

Imagine you're evaluating a tech startup for acquisition. Traditionally, you’d:

Download financials

Project revenue growth

Build a 5-year forecast

Calculate terminal value

Discount cash flows
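
The traditional steps listed above can be sketched in a few lines of Python. This is a simplified illustration, not a production model: it assumes a constant growth rate over the forecast, a Gordon-growth terminal value, and made-up inputs.

```python
def dcf_value(base_cash_flow, growth_rate, discount_rate, terminal_growth, years=5):
    """Value from a constant-growth forecast plus a Gordon-growth terminal value."""
    if discount_rate <= terminal_growth:
        raise ValueError("discount_rate must exceed terminal_growth")
    # Forecast: grow the base cash flow for each year of the horizon.
    cash_flows = [base_cash_flow * (1 + growth_rate) ** t for t in range(1, years + 1)]
    # Discount each forecast cash flow back to today.
    pv_forecast = sum(
        cf / (1 + discount_rate) ** t for t, cf in enumerate(cash_flows, start=1)
    )
    # Terminal value at the end of the horizon, then discounted to today.
    terminal_value = cash_flows[-1] * (1 + terminal_growth) / (discount_rate - terminal_growth)
    pv_terminal = terminal_value / (1 + discount_rate) ** years
    return pv_forecast + pv_terminal

# Hypothetical inputs, for illustration only.
value = dcf_value(base_cash_flow=100.0, growth_rate=0.10,
                  discount_rate=0.12, terminal_growth=0.03)
print(round(value, 2))
```

A quick sanity check on a function like this: with zero growth in both stages, the result should collapse to the perpetuity value, cash flow divided by the discount rate.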

With AI tools:

Financials are extracted via OCR and organized automatically.

Forecast assumptions are suggested based on industry data.

Scenario-based DCF models are generated in minutes.

You spend your time refining assumptions and crafting the investment story.

This is what the future of financial modeling looks like—and why upskilling is critical.

Final Thoughts: Evolve or Be Left Behind

AI isn’t the end of financial modeling—it’s the beginning of a new era. In this future, the best investment bankers are not just Excel wizards—they’re strategic thinkers, storytellers, and tech-powered analysts.

By embracing this change and mastering modern modeling skills, you can future-proof your finance career.

And if you're serious about making that leap, enrolling in an investment banking course in Chennai can provide the training, exposure, and credibility to help you rise in the AI age.

How Python Can Be Used in Finance: Applications, Benefits & Real-World Examples

In the rapidly evolving world of finance, staying ahead of the curve is essential. One of the most powerful tools at the intersection of technology and finance today is Python. Known for its simplicity and versatility, Python has become a go-to programming language for financial professionals, data scientists, and fintech companies alike.

This blog explores how Python is used in finance, the benefits it offers, and real-world examples of its applications in the industry.

Why Python in Finance?

Python stands out in the finance world because of its:

Ease of use: Simple syntax makes it accessible to professionals from non-programming backgrounds.

Rich libraries: Packages like Pandas, NumPy, Matplotlib, Scikit-learn, and PyAlgoTrade support a wide array of financial tasks.

Community support: A vast, active user base means better resources, tutorials, and troubleshooting help.

Integration: Easily interfaces with databases, Excel, web APIs, and other tools used in finance.

Key Applications of Python in Finance

1. Data Analysis & Visualization

Financial analysis relies heavily on large datasets. Python’s libraries like Pandas and NumPy are ideal for:

Time-series analysis

Portfolio analysis

Risk assessment

Cleaning and processing financial data

Visualization tools like Matplotlib, Seaborn, and Plotly allow users to create interactive charts and dashboards.
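
As a small example of the time-series work described in this section, the sketch below computes daily returns, a rolling average, and a cumulative return with Pandas. The prices are invented for the example.

```python
import pandas as pd

# Hypothetical daily closing prices for a single asset.
prices = pd.Series(
    [100.0, 102.0, 101.0, 105.0, 107.0, 106.0],
    index=pd.date_range("2025-01-01", periods=6, freq="D"),
    name="close",
)

daily_returns = prices.pct_change().dropna()     # simple day-over-day returns
rolling_mean = prices.rolling(window=3).mean()   # 3-day moving average
cumulative_return = prices.iloc[-1] / prices.iloc[0] - 1

print(daily_returns.round(4))
print(rolling_mean.round(2))
print(f"cumulative return: {cumulative_return:.2%}")
```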

2. Algorithmic Trading

Python is a favorite among algo traders due to its fast development cycle and ease of prototyping.

Backtesting strategies using libraries like Backtrader and Zipline

Live trading integration with brokers via APIs (e.g., Alpaca, Interactive Brokers)

Strategy optimization using historical data
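
To show what a backtest does at its simplest, here is a hand-rolled moving-average crossover test in plain NumPy. It deliberately does not use Backtrader or Zipline, ignores transaction costs, and runs on a made-up price path, so treat it as a teaching sketch rather than a realistic strategy evaluation.

```python
import numpy as np

def moving_average(values, window):
    """Trailing simple moving average; the first window-1 entries are NaN."""
    values = np.asarray(values, dtype=float)
    out = np.full(len(values), np.nan)
    csum = np.concatenate(([0.0], np.cumsum(values)))
    out[window - 1:] = (csum[window:] - csum[:-window]) / window
    return out

def backtest_crossover(prices, fast=3, slow=5):
    """Total return from holding the asset while the fast average is above the slow one."""
    prices = np.asarray(prices, dtype=float)
    # Comparisons involving NaN evaluate to False, so no position is taken early on.
    signal = moving_average(prices, fast) > moving_average(prices, slow)
    position = np.zeros(len(prices))
    position[1:] = signal[:-1]  # act one day after the signal to avoid look-ahead
    daily_returns = np.zeros(len(prices))
    daily_returns[1:] = prices[1:] / prices[:-1] - 1
    return float(np.prod(1 + position * daily_returns) - 1)

# Hypothetical price path, for illustration only.
prices = [100, 101, 103, 102, 105, 108, 107, 110, 112, 111]
print(f"strategy return: {backtest_crossover(prices):.2%}")
```

Shifting the signal by one day matters: trading on the same bar that generated the signal would use information that was not yet available.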

3. Risk Management & Analytics

With Python, financial institutions can simulate market scenarios and model risk using:

Monte Carlo simulations

Value at Risk (VaR) models

Stress testing

These help firms manage exposure and regulatory compliance.
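
A minimal Monte Carlo estimate of Value at Risk might look like the sketch below. It assumes one-period returns are normally distributed, which is a strong simplification, and the portfolio size, mean, and volatility are made-up inputs.

```python
import numpy as np

def monte_carlo_var(portfolio_value, mean_return, volatility,
                    confidence=0.95, n_sims=100_000, seed=0):
    """One-period VaR under an assumed normal return distribution."""
    rng = np.random.default_rng(seed)
    simulated_returns = rng.normal(mean_return, volatility, size=n_sims)
    pnl = portfolio_value * simulated_returns
    # VaR is the loss exceeded in only (1 - confidence) of the simulated scenarios.
    return float(-np.percentile(pnl, 100 * (1 - confidence)))

# Hypothetical portfolio and parameters.
var_95 = monte_carlo_var(1_000_000, mean_return=0.0005, volatility=0.02)
var_99 = monte_carlo_var(1_000_000, mean_return=0.0005, volatility=0.02, confidence=0.99)
print(f"1-day 95% VaR: {var_95:,.0f}")
print(f"1-day 99% VaR: {var_99:,.0f}")
```

Fixing the seed makes the estimate reproducible. In practice the return model would be calibrated to historical data or replaced with a fatter-tailed distribution.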

4. Financial Modeling & Forecasting

Python can be used to build predictive models for:

Stock price forecasting

Credit scoring

Loan default prediction

Scikit-learn, TensorFlow, and XGBoost are popular libraries for machine learning applications in finance.

5. Web Scraping & Sentiment Analysis

Real-time data from financial news, social media, and websites can be scraped using BeautifulSoup and Scrapy. Python’s NLP tools (like NLTK, spaCy, and TextBlob) can be used for sentiment analysis to gauge market sentiment and inform trading strategies.

Benefits of Using Python in Finance

✅ Fast Development

Python allows for quick development and iteration of ideas, which is crucial in a dynamic industry like finance.

✅ Cost-Effective

As an open-source language, Python reduces licensing and development costs.

✅ Customization

Python empowers teams to build tailored solutions that fit specific financial workflows or trading strategies.

✅ Scalability

From small analytics scripts to large-scale trading platforms, Python can handle applications of various complexities.

Real-World Examples

💡 JPMorgan Chase

Developed a proprietary Python-based platform called Athena to manage risk, pricing, and trading across its investment banking operations.

💡 Quantopian (shut down in 2020; its team joined Robinhood)

Used Python for developing and backtesting trading algorithms. Users could write Python code to create and test strategies on historical market data.

💡 BlackRock

Utilizes Python for data analytics and risk management to support investment decisions across its portfolio.

💡 Robinhood

Leverages Python for backend services, data pipelines, and fraud detection algorithms.

Getting Started with Python in Finance

Want to get your hands dirty? Here are a few resources:

Books:

Python for Finance by Yves Hilpisch

Machine Learning for Asset Managers by Marcos López de Prado

Online Courses:

Coursera: Python and Statistics for Financial Analysis

Udemy: Python for Financial Analysis and Algorithmic Trading

Practice Platforms:

QuantConnect

Alpaca

Interactive Brokers API

Final Thoughts

Python is transforming the financial industry by providing powerful tools to analyze data, build models, and automate trading. Whether you're a finance student, a data analyst, or a hedge fund quant, learning Python opens up a world of possibilities.

As finance becomes increasingly data-driven, Python will continue to be a key differentiator in gaining insights and making informed decisions.

Do you work in finance or aspire to? Want help building your first Python financial model? Let me know, and I’d be happy to help!

#outfit#branding#financial services#investment#finance#financial advisor#financial planning#financial wellness#financial freedom#fintech

Learn how to use NumPy in Python with this simple tutorial. Understand arrays, mathematical functions, and data handling easily. Perfect for beginners starting with Python data science.

Python for Data Science: The Only Guide You Need to Get Started in 2025

Data is the lifeblood of modern business, powering decisions in healthcare, finance, marketing, sports, and more. And at the core of it all lies a powerful and beginner-friendly programming language — Python.

Whether you’re an aspiring data scientist, analyst, or tech enthusiast, learning Python for data science is one of the smartest career moves you can make in 2025.

In this guide, you’ll learn:

Why Python is the preferred language for data science

The libraries and tools you must master

A beginner-friendly roadmap

How to get started with a free full course on YouTube

Why Python is the #1 Language for Data Science

Python has earned its reputation as the go-to language for data science, and here's why:

1. Easy to Learn, Easy to Use

Python’s syntax is clean, simple, and intuitive. You can focus on solving problems rather than struggling with the language itself.

2. Rich Ecosystem of Libraries

Python offers thousands of specialized libraries for data analysis, machine learning, and visualization.

3. Community and Resources

With a vibrant global community, you’ll never run out of tutorials, forums, or project ideas to help you grow.

4. Integration with Tools & Platforms

From Jupyter notebooks to cloud platforms like AWS and Google Colab, Python is supported by most of the tools data teams already use.

What You Can Do with Python in Data Science

Let’s look at real tasks you can perform using Python:

Task: Python Tools

Data cleaning & manipulation: Pandas, NumPy

Data visualization: Matplotlib, Seaborn, Plotly

Machine learning: Scikit-learn, XGBoost

Deep learning: TensorFlow, PyTorch

Statistical analysis: Statsmodels, SciPy

Big data integration: PySpark, Dask

Python lets you go from raw data to actionable insight — all within a single ecosystem.

A Beginner's Roadmap to Learn Python for Data Science

If you're starting from scratch, follow this step-by-step learning path:

✅ Step 1: Learn Python Basics

Variables, data types, loops, conditionals

Functions, file handling, error handling

✅ Step 2: Explore NumPy

Arrays, broadcasting, numerical computations

✅ Step 3: Master Pandas

DataFrames, filtering, grouping, merging datasets

✅ Step 4: Visualize with Matplotlib & Seaborn

Create charts, plots, and visual dashboards

✅ Step 5: Intro to Machine Learning

Use Scikit-learn for classification, regression, clustering

✅ Step 6: Work on Real Projects

Apply your knowledge to real-world datasets (Kaggle, UCI, etc.)
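To make Steps 2 and 3 a bit more concrete, here is a minimal sketch of NumPy broadcasting followed by Pandas filtering, merging, and grouping. The sample data and the names (scores, orders, customers) are made up for illustration and are not from any real dataset.

```python
import numpy as np
import pandas as pd

# Step 2: NumPy broadcasting. Subtracting a (2,) array of column means
# from a (3, 2) array stretches the means across every row automatically.
scores = np.array([[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]])
centered = scores - scores.mean(axis=0)

# Step 3: Pandas filtering, merging, and grouping (hypothetical sample data).
orders = pd.DataFrame({
    "customer_id": [1, 2, 1, 3],
    "amount": [20.0, 35.0, 15.0, 50.0],
})
customers = pd.DataFrame({
    "customer_id": [1, 2, 3],
    "region": ["north", "south", "north"],
})

large_orders = orders[orders["amount"] >= 20.0]           # filtering
merged = large_orders.merge(customers, on="customer_id")  # merging
totals = merged.groupby("region")["amount"].sum()         # grouping

print(centered)
print(totals)
```

Once this feels comfortable, swapping the hand-built DataFrames for a CSV loaded with pd.read_csv is a natural next step.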

Who Should Learn Python for Data Science?

Python is incredibly beginner-friendly and widely used, making it ideal for:

Students looking to future-proof their careers

Working professionals planning a transition to data

Analysts who want to automate and scale insights

Researchers working with data-driven models

Developers diving into AI, ML, or automation

How Long Does It Take to Learn?

You can grasp Python fundamentals in 2–3 weeks with consistent daily practice. To become proficient in data science using Python, expect to spend 3–6 months, depending on your pace and project experience.

The good news? You don’t need to do it alone.

🎓 Learn Python for Data Science – Full Free Course on YouTube

We’ve put together a FREE, beginner-friendly YouTube course that covers everything you need to start your data science journey using Python.

📘 What You’ll Learn:

Python programming basics

NumPy and Pandas for data handling

Matplotlib for visualization

Scikit-learn for machine learning

Real-life datasets and projects

Step-by-step explanations

📺 Watch the full course now → 👉 Python for Data Science Full Course

You’ll walk away with job-ready skills and project experience — at zero cost.

🧭 Final Thoughts

Python isn’t just a programming language — it’s your gateway to the future.

By learning Python for data science, you unlock opportunities across industries, roles, and technologies. The demand is high, the tools are ready, and the learning path is clearer than ever.

Don’t let analysis paralysis hold you back.

Click here to start learning now → https://youtu.be/6rYVt_2q_BM

#PythonForDataScience #LearnPython #FreeCourse #DataScience2025 #MachineLearning #NumPy #Pandas #DataAnalysis #AI #ScikitLearn #UpskillNow

Text

Can I use Python for big data analysis?

Yes, Python is a powerful tool for big data analysis. Here’s how Python handles large-scale data analysis:

Libraries for Big Data:

Pandas:

While primarily designed for datasets that fit in memory, Pandas can handle larger ones when used with tools like Dask or when memory usage is optimized.

NumPy:

Provides support for large, multi-dimensional arrays and matrices, along with a collection of mathematical functions to operate on these arrays.

Dask:

A parallel computing library that extends Pandas and NumPy to larger datasets. It allows you to scale Python code from a single machine to a distributed cluster.
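As a rough illustration of what "optimizing memory usage" in Pandas can look like, the sketch below reads a CSV in small chunks and keeps only a running total, then loads a repetitive text column as a category. The in-memory CSV and its column names (city, sales) are hypothetical stand-ins for a large file on disk, and the chunk size is deliberately tiny.

```python
import io
import pandas as pd

# Stand-in for a large CSV file on disk (hypothetical data).
cities = ["Oslo", "Lima", "Oslo", "Pune", "Lima", "Oslo"]
csv_text = "city,sales\n" + "\n".join(
    f"{city},{i}" for i, city in enumerate(cities)
)

# Read a few rows at a time and keep only a running aggregate in memory.
totals = {}
for chunk in pd.read_csv(io.StringIO(csv_text), chunksize=2):
    for city, subtotal in chunk.groupby("city")["sales"].sum().items():
        totals[city] = totals.get(city, 0) + int(subtotal)

# Storing a repetitive string column as "category" usually lowers memory use.
df = pd.read_csv(io.StringIO(csv_text), dtype={"city": "category"})

print(totals)
print(df.dtypes)
```

With a real file you would pass its path to pd.read_csv and pick a chunk size in the tens or hundreds of thousands of rows. Dask automates a similar split-and-combine pattern across cores or machines.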

Distributed Computing:

PySpark:

The Python API for Apache Spark, which is designed for large-scale data processing. PySpark can handle big data by distributing tasks across a cluster of machines, making it suitable for large datasets and complex computations.

Dask:

Also provides distributed computing capabilities, allowing you to perform parallel computations on large datasets across multiple cores or nodes.

Data Storage and Access:

HDF5:

A file format and set of tools for managing complex data. Python’s h5py library provides an interface to read and write HDF5 files, which are suitable for large datasets.

Databases:

Python can interface with various big data databases like Apache Cassandra, MongoDB, and SQL-based systems. Libraries such as SQLAlchemy facilitate connections to relational databases.
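One common pattern with SQL-based systems is to let the database do the aggregation and pull results into Python in batches. The sketch below uses the standard library's sqlite3 module as a stand-in for a larger database, which is an assumption made so the example runs anywhere. The events table and its columns are hypothetical, and a production setup would more likely connect through SQLAlchemy or a vendor driver.

```python
import sqlite3

# In-memory SQLite database standing in for a real data store.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (user_id INTEGER, duration REAL)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?)",
    [(1, 2.5), (2, 4.0), (1, 1.5), (3, 3.0), (2, 1.0)],
)

# Let the database aggregate so only the summary rows reach Python.
cursor = conn.execute(
    "SELECT user_id, SUM(duration) FROM events "
    "GROUP BY user_id ORDER BY user_id"
)

# fetchmany pulls rows in batches instead of loading the full result at once.
summary = {}
while True:
    rows = cursor.fetchmany(2)
    if not rows:
        break
    summary.update(rows)  # each row is a (user_id, total_duration) pair

conn.close()
print(summary)
```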

Data Visualization:

Matplotlib, Seaborn, and Plotly: These libraries allow you to create visualizations of large datasets, though for extremely large datasets, tools designed for distributed environments might be more appropriate.

Machine Learning:

Scikit-learn:

While not specifically designed for big data, Scikit-learn can be used with tools like Dask to handle larger datasets.

TensorFlow and PyTorch:

These frameworks support large-scale machine learning and can be integrated with big data processing tools for training and deploying models on large datasets.

Python’s ecosystem includes a variety of tools and libraries that make it well-suited for big data analysis, providing flexibility and scalability to handle large volumes of data.

Drop a message to learn more!
